The Problem: AI Search Is Replacing Google Search

As AI assistants become the default way people search for information, a new challenge is emerging: brand visibility in AI search.

When someone asks Google "What is Copper?", the search engine returns a mix of results, including Copper CRM. The user clicks, browses, and decides.

When someone asks ChatGPT the same question, they get a single answer. And if that answer is about the metal instead of the CRM software, the brand never had a chance.

We call this the Context Gap: the difference between how AI responds when given context ("What is Copper, the CRM?") versus when asked directly ("What is Copper?").

Users don't naturally provide context. They ask simple questions. And simple questions are where brands disappear from AI search results.


The Experiment

We tested 5 well-funded tech brands with common English word names:

- Honey: Coupon browser extension, acquired for $4B by PayPal
- Heap: Product analytics platform, $110M+ raised
- Pitch: Presentation software, $100M+ raised
- Copper: CRM for Google Workspace, $90M+ raised
- Grain: Meeting recording tool, $20M+ raised

We tested each brand with 3 prompt variants:

-

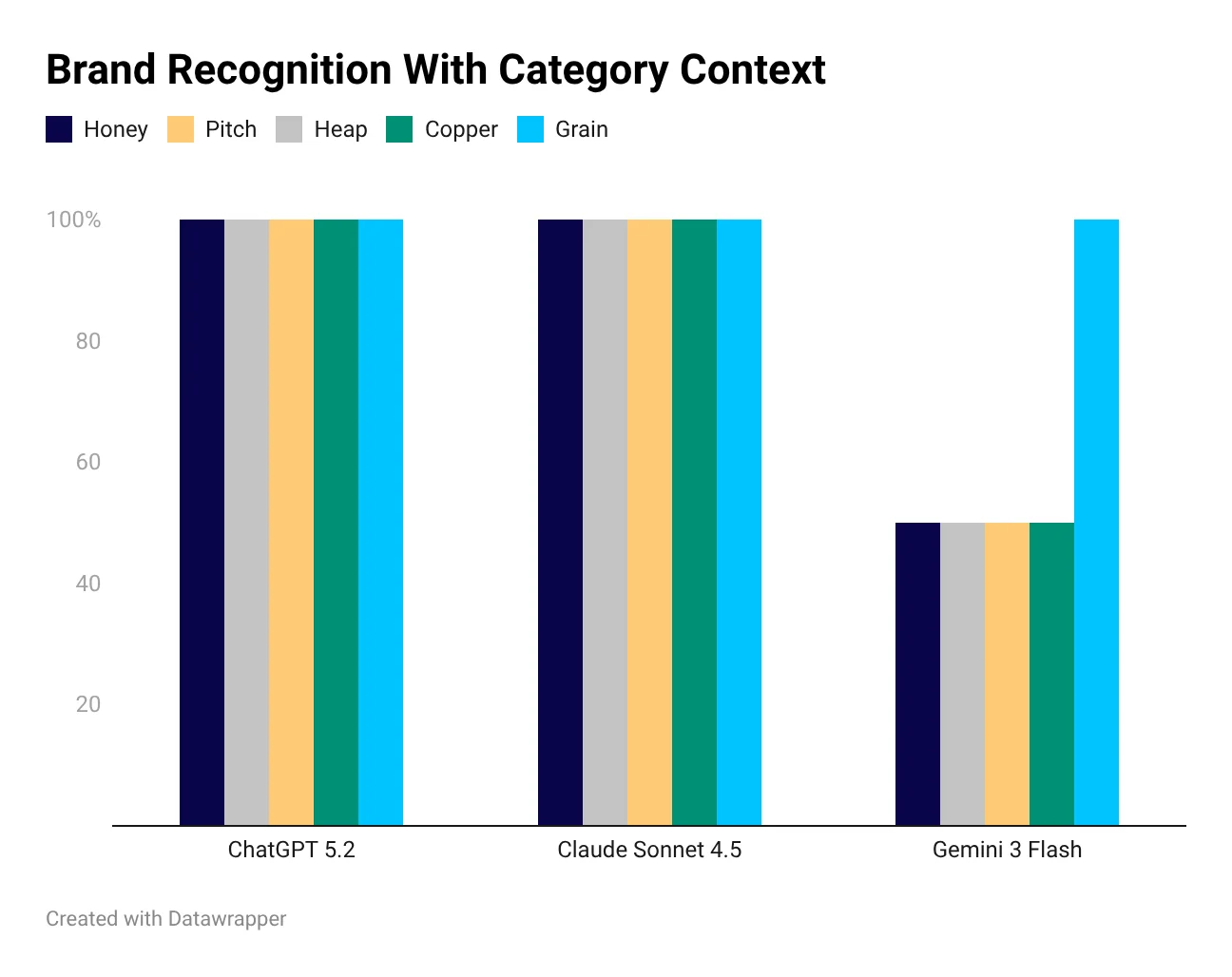

With Category: "What is [Brand], the [category]?" (e.g., "What is Copper, the CRM software?")

-

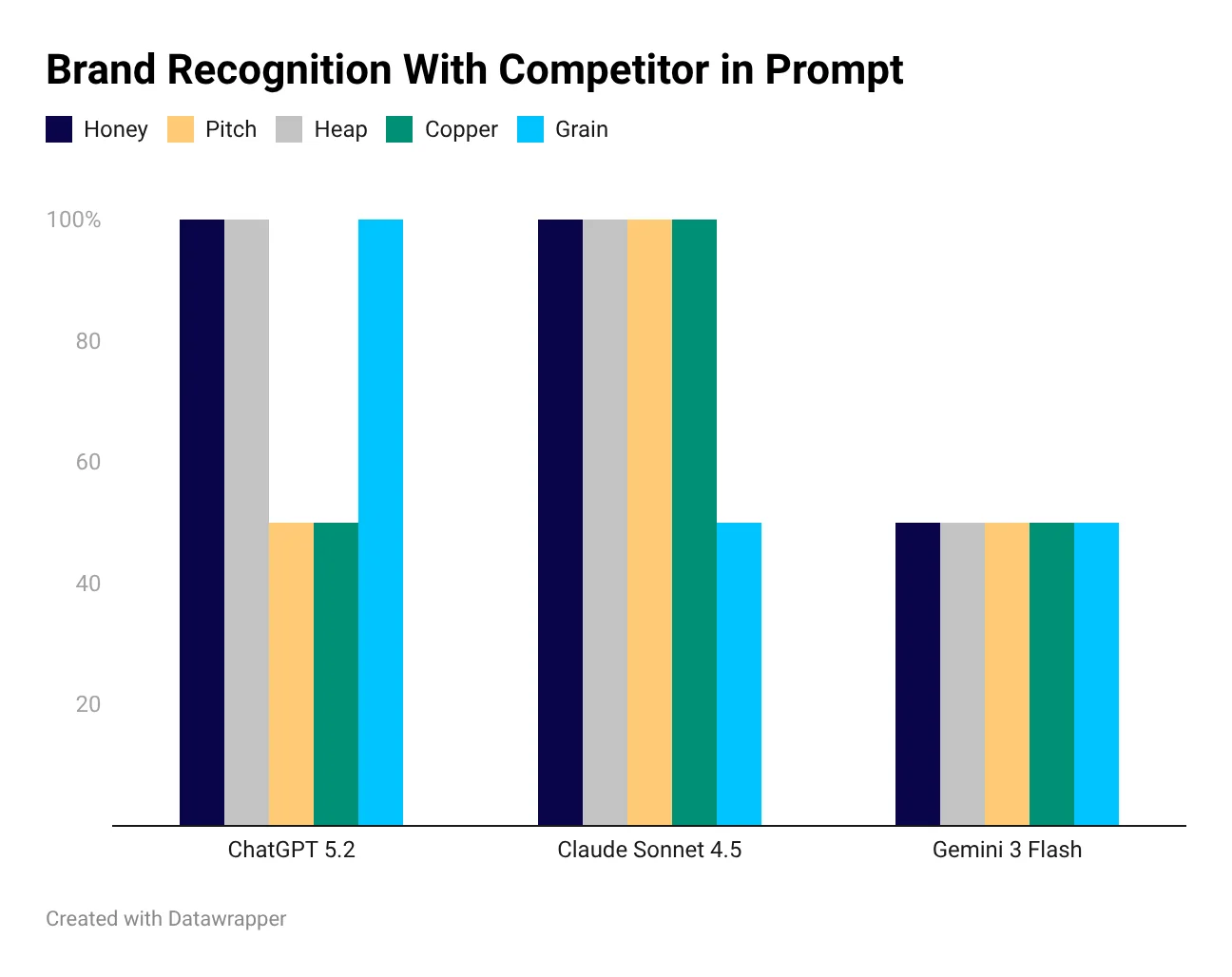

With Competitor: "Compare [Brand] vs [Competitor]" (e.g., "Compare Copper vs Streak")

-

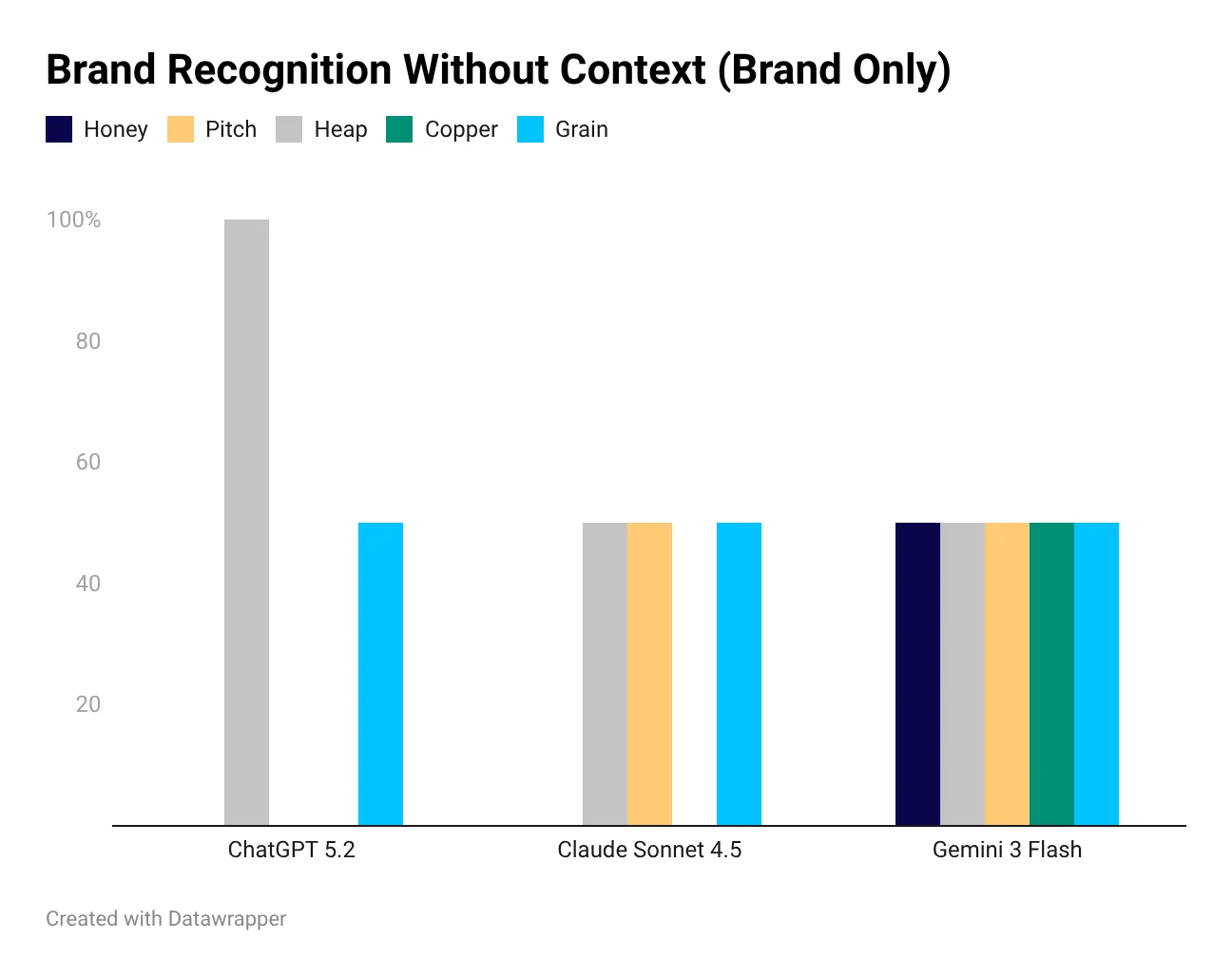

Alone: "What is [Brand]?" (no context)

Each variant was tested on 3 major AI models: ChatGPT (GPT-5.2), Claude (Sonnet 4.5), and Gemini (3 Flash).
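The test matrix can be sketched in a few lines of Python. This is a minimal illustration, not the tooling used for the study: the template strings mirror the three prompt variants, while the `build_prompts` helper and the data layout are hypothetical. The article names a competitor only for Copper (Streak) and Grain (Otter), so the example fills the templates for Copper and otherwise just counts the combinations.

```python
from itertools import product

# Templates for the three prompt variants described in the article.
TEMPLATES = {
    "with_category": "What is {brand}, the {category}?",
    "with_competitor": "Compare {brand} vs {competitor}",
    "alone": "What is {brand}?",
}

BRANDS = ["Honey", "Heap", "Pitch", "Copper", "Grain"]
MODELS = ["ChatGPT (GPT-5.2)", "Claude (Sonnet 4.5)", "Gemini (3 Flash)"]


def build_prompts(brand: str, category: str, competitor: str) -> dict[str, str]:
    """Hypothetical helper: fill every template for one brand.

    str.format ignores unused keyword arguments, so the "alone" template
    simply drops the category and competitor.
    """
    return {
        variant: template.format(brand=brand, category=category, competitor=competitor)
        for variant, template in TEMPLATES.items()
    }


# The three Copper prompts quoted in the article.
copper = build_prompts("Copper", "CRM software", "Streak")
for variant, prompt in copper.items():
    print(f"{variant:>15}: {prompt}")

# Each (brand, variant, model) combination is one scored data point.
matrix = list(product(BRANDS, TEMPLATES, MODELS))
print(f"{len(BRANDS)} brands x {len(TEMPLATES)} variants x {len(MODELS)} models = {len(matrix)}")
```

Keeping the prompts as plain templates makes it easy to rerun the exact same wording later, which matters because the article notes that results may vary with prompt phrasing.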

Scoring:

| Recognition score | Meaning |
|---|---|
| 100% | The AI correctly recognized the brand/product |
| 50% | The AI asked for clarification ("Which Copper do you mean?") |
| 0% | The AI confidently gave the wrong answer (explained the common word) |
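The scoring scale maps each labeled response to a number, and a brand's recognition rate can then be taken as the mean over its responses. This is a sketch that assumes each response has already been hand-labeled into one of the three outcomes; the `Outcome` names, the `recognition_rate` helper, and the averaging step are hypothetical, since the article does not say how scores were aggregated.

```python
from enum import Enum


class Outcome(Enum):
    """Label for one model response, on the article's three-level scale."""
    RECOGNIZED = 100  # correctly identified the brand/product
    CLARIFIED = 50    # asked which meaning the user intended
    WRONG = 0         # confidently explained the common word instead


def recognition_rate(outcomes: list[Outcome]) -> float:
    """Mean recognition score (0-100) over a set of labeled responses."""
    if not outcomes:
        raise ValueError("need at least one labeled response")
    return sum(o.value for o in outcomes) / len(outcomes)


# Hypothetical labels for one brand's "Alone" prompt on three models:
# two confident wrong answers and one clarifying question.
alone = [Outcome.WRONG, Outcome.WRONG, Outcome.CLARIFIED]
print(f"{recognition_rate(alone):.1f}%")  # 16.7%
```

Averaging this way gives a clarifying question half credit, which matches the table; a stricter metric could count only `RECOGNIZED` responses.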

The Results

The "Alone" Problem: Why ChatGPT Doesn't Recognize Your Brand

When users ask about a brand without context, AI brand recognition drops dramatically:

- Honey: 0% on ChatGPT and Claude (AI explains bee honey)
- Copper: 0% on ChatGPT and Claude (AI explains the metal)
- Pitch: 0% on ChatGPT (AI explains sound frequency)

These aren't small startups. They've raised hundreds of millions of dollars combined. Yet when users ask about them by name alone, ChatGPT answers about the common word instead.

Context Fixes (Almost) Everything, but Users Don't Provide It

With category context, recognition jumps to 100% on ChatGPT and Claude. The AI knows exactly what you're asking about.

The problem? Users don't naturally include context. They type "What is Honey?", not "What is Honey, the browser extension?" This is the core challenge of AI search optimization.
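The Context Gap can be read as a simple difference: recognition with category context minus recognition for the bare question. A minimal sketch, assuming scores on the 0-100 scale above; the `context_gap` function is illustrative, and the example data uses only results reported in this article (Honey and Copper on ChatGPT and Claude).

```python
# Scores (0-100) reported in the article: with category context, ChatGPT and
# Claude reached 100%; asked alone, both scored 0% on Honey and Copper.
REPORTED = {
    ("Honey", "ChatGPT"): {"with_category": 100, "alone": 0},
    ("Honey", "Claude"): {"with_category": 100, "alone": 0},
    ("Copper", "ChatGPT"): {"with_category": 100, "alone": 0},
    ("Copper", "Claude"): {"with_category": 100, "alone": 0},
}


def context_gap(with_category: float, alone: float) -> float:
    """Recognition points lost when the user leaves out the category."""
    return with_category - alone


for (brand, model), scores in REPORTED.items():
    gap = context_gap(scores["with_category"], scores["alone"])
    print(f"{brand} on {model}: context gap = {gap} points")
```

A clarifying answer (50%) to the bare question would show up as a 50-point gap, so a more cautious model narrows the gap on paper without giving users a direct answer about the brand.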

Different Models, Same Pattern

ChatGPT and Claude show nearly identical behavior: excellent AI brand recognition with context, poor recognition without it. Gemini 3 Flash is more cautious and asks for clarification more often (hence more 50% scores), but that still leaves users without a direct answer about the brand.

Even Competitor Context Can Fail

Adding a competitor doesn't always help. When both the brand and competitor have common word names, confusion persists:

- "Compare Copper vs Streak" resulted in ChatGPT asking for clarification (50%)
- "Compare Grain vs Otter" had mixed results across models

What This Means for Brands: The New AI SEO

Generative Engine Optimization is the New SEO

As traffic shifts from traditional Google search to AI assistants, brand visibility in AI responses becomes critical. This isn't about ranking on page 1 anymore. It's about being the answer when ChatGPT, Claude, or Gemini responds to a user query.

This shift is sometimes called Generative Engine Optimization (GEO) or AI Search Optimization. Whatever you call it, the goal is the same: ensuring AI assistants recognize and recommend your brand.

The Hidden Cost of Poor AI Brand Visibility

Every time AI fails to recognize your brand:

- A potential customer gets the wrong information
- Your competitor might get mentioned instead
- The user never discovers that your product exists

How to Improve Your Brand Visibility in AI

- Monitor your AI brand visibility across different prompt types and models
- Identify context gaps where your brand fails without explicit context
- Optimize your digital presence to strengthen brand signals that AI models can learn from
- Track changes over time as models are updated and retrained
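The monitoring and tracking steps above can be combined into a periodic check that compares two test runs and flags every prompt whose recognition score dropped, for example after a model update. This is a sketch, not a specific product's workflow: the run format (a mapping from brand, model, and variant to a 0-100 score), the `find_regressions` name, the `ExampleBrand` placeholder, and the sample scores are all hypothetical.

```python
from typing import NamedTuple

Key = tuple[str, str, str]  # (brand, model, prompt variant)


class Regression(NamedTuple):
    key: Key
    before: int
    after: int


def find_regressions(previous: dict[Key, int], current: dict[Key, int]) -> list[Regression]:
    """Return every prompt tested in both runs whose score went down."""
    drops = [
        Regression(key, previous[key], current[key])
        for key in previous.keys() & current.keys()
        if current[key] < previous[key]
    ]
    # Largest drop first, so the worst regressions surface at the top.
    return sorted(drops, key=lambda r: r.before - r.after, reverse=True)


# Hypothetical scores from two monthly runs for a placeholder brand.
january = {
    ("ExampleBrand", "ChatGPT", "alone"): 100,
    ("ExampleBrand", "Gemini", "alone"): 50,
    ("ExampleBrand", "Claude", "with_category"): 100,
}
february = {
    ("ExampleBrand", "ChatGPT", "alone"): 0,
    ("ExampleBrand", "Gemini", "alone"): 50,
    ("ExampleBrand", "Claude", "with_category"): 50,
}

for r in find_regressions(january, february):
    print(f"{r.key}: {r.before}% -> {r.after}%")
```

Comparing only keys present in both runs keeps the check robust when prompts or models are added between runs; new combinations simply start their own history.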

The brands that figure out AI search optimization first will have a significant advantage as more users shift from Google to AI assistants for product research and recommendations.

Methodology Notes

- Models tested: ChatGPT (GPT-5.2), Claude (Sonnet 4.5), Gemini (3 Flash)
- Test date: January 2026
- Sample size: 5 brands, 3 variants each, 3 models = 45 data points
- Limitations: Small sample size, English-language prompts only

This study is directional, not statistically significant. Results may vary based on prompt phrasing, model updates, and other factors.

Frequently Asked Questions

Which brands did we test?

We tested 5 well-funded tech brands whose names are also common English words: Honey (coupon browser extension), Heap (product analytics), Pitch (presentation software), Copper (CRM for Google Workspace), and Grain (meeting recording). They range from Honey, acquired by PayPal for $4B, to Grain, with $20M+ raised.

What did we measure?

Brand recognition, per brand, per prompt variant, per AI model. Each of the 5 brands was tested with 3 prompt variants (With Category, With Competitor, Alone) on 3 models, and each response was scored 100% (correctly recognized), 50% (asked for clarification), or 0% (confidently explained the common word). That gives 45 data points in total.

How did results vary across ChatGPT, Claude, and Gemini?

ChatGPT and Claude behaved almost identically: strong recognition with category context and, for brands like Honey and Copper, confident answers about the common word without it. Gemini 3 Flash asked for clarification more often, which scores 50% but still leaves the user without a direct answer about the brand. Because behavior differs by model, you cannot treat AI visibility as one unified channel.

What was the biggest finding?

The gap between 100% recognition with category context and as little as 0% without it. On ChatGPT and Claude, Honey and Copper scored 100% when the prompt named their category and 0% when the brand name was asked alone; the models explained bee honey and the metal instead. When the user supplies context, brands surface correctly. We did not test remedies directly, but consistent third-party signals and entity reinforcement are the likely way to close the gap.

How should other brands interpret these results?

Treat your brand's AI visibility as a function of model-specific signals, not a single aggregate score. If ChatGPT gets you right and Gemini doesn't, the fix is probably different on each. Use this case study as a methodology template: run the same three prompt variants and scoring scale on your own brand across the same 3 models.